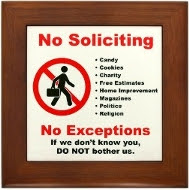

Despite insisting it is not a media company and is not in the business of making editorial judgments, Facebook is all too happy to censor user-generated content. Facebook censors operate under a cloak of anonymity, with no accountability to users.

Facebook Auto Censorship

Have you ever tried to post a comment and gotten a message saying, "Unable to post comment - try again"?

Or "An error occurred. Please Try again in a few minutes"?

And no matter how long you wait, you keep getting the same error?

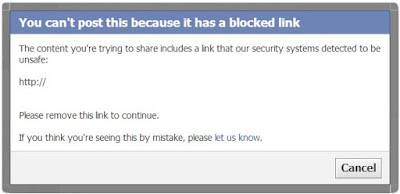

Have you ever had a Facebook post rejected because of an "unsafe link"?

The link could be a web page or an image that Facebook decides is "unsafe."

Have you ever had a Facebook post rejected because Facebook decided your comment "seems irrelevant or inappropriate"?

The primary way Facebook flags and takes down content is via automated algorithms. As with any automated system examining content, there will always be false positives: innocent text or images that trigger the censor. The problem is that Facebook censors too much innocent content and makes it impossible to reach a human to appeal the decision.

So if you see "If you think you are seeing this by mistake, please let us know," keep in mind that no matter what you write, no human will read it and reconsider. It's just a way to make YOU feel better about their censorship.

Facebook Censorship by Trolls

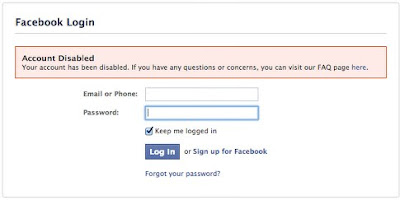

Have you ever been notified by Facebook that one of your posts has been removed?

Have you ever been notified by Facebook that you have been "Blocked From Posting"?

Then chances are your post "triggered" somebody who decided to retaliate by reporting you for violating "Facebook Community Standards."

With more than 1.7 billion people using the social network every month, Facebook can't monitor everything that passes through its site. The company does have teams of people around the globe devoted to policing Facebook, but those teams largely rely on its community of users to call out questionable behavior.

Facebook employees are completely overwhelmed by complaints from users, their guidelines for making determinations are murky at best, and they have very little time in which to evaluate the validity of individual complaints. As a result, their default response is almost always to remove comments and suspend the commenters.

When users are suspended or have their content removed, there is often little explanation, and they're left to figure out the reason for themselves.

And the reporting system is ripe for abuse by cyber bullies. They can maneuver you into posting a keyword they can then report you for, or go searching through your posts to find something innocuous that contains a keyword out of context. They can also gang up on their victims, reporting innocent comments often enough that the system sides with the reporter or a human reviewer makes an assumption in their favor.

Even mentioning someone else’s name in a post means that, if the comment is reported, it can be viewed as violating community standards. This is particularly ironic, since clicking on a "Reply" link below someone's comment causes the system to print their name on the reply before your answer, and other folks routinely use the individual’s name out of courtesy when replying. The name recognition is automated to the point that the person reporting abuse need only have an account with the same name, or part of the same name, as the one mentioned in the comment in order to report it as some kind of "personal attack" and silence an opponent.

Facebook's policies enable bullies to target other users for harassment by falsely reporting their photos, their posts, or their account with impunity. The victim gets punished, and the abuser walks away consequence-free.

As often as not, one of the victim's so-called "friends" is the one doing the reporting, and the victim has no way to discover who this person is in order to unfriend them and stop the harassment, and no recourse either. Victims who attempt to contact Facebook about the issue are ignored and sometimes even banned. They are simply helpless, at the mercy of a severely flawed system.

The entire system is so unpredictable and inconsistent that it’s difficult to determine how to censor yourself when you are being targeted by cyber bullies. The temporary bans just keep getting longer and longer, with no way to defend yourself against the onslaught until you stop posting completely or are permanently banned.

Facebook trolls delight in reporting people they do not like for trumped-up violations that often result in removal of individual posts or entire accounts. This is a very common practice, especially in political circles. If someone doesn't like you, or the comments you post, they can report you for spam or some other reason and tell their friends to do the same. The automatic systems will take your content down and send you a warning. If the complaints about you continue, Facebook will block you from posting and eventually disable your account. How long that takes and how many complaints it takes seem to vary quite a bit.

There is no channel of communication a victim of this cyber bullying can use to defend themselves. Targets have to wait out their bans and then retreat from the places where they were being victimized. In this manner, more than any other, Facebook fails to defend its users against cyber bullying.

Two days after suggesting that the United States reallocate portions of its military budget toward healthcare and education, the satirical "God" account felt the wrath of Facebook. The post received such a massive, vitriolic response that algorithms automatically banned God for 30 days.

"God" had no option but to wait it out. The "God" account frequently draws the ire of Facebook users who either view the profile as blasphemous to their religion or disparaging of their country because God often criticizes American imperialism. This suspension over what amounts to an uncomfortable truth delivered as satire is an example of Facebook's increasingly oppressive censorship.

False DMCA Claims

Because Facebook does not validate the identity of anyone submitting a DMCA takedown notice, nor does it check to see if the report was sent from a legitimate email address, anyone with an ax to grind can fill out a form with a bogus copyright complaint to get a Facebook page removed.

It's no wonder that Facebook is so quick to censor content some find objectionable and to remove potentially copyrighted material. It costs money to evaluate complaints. It's much easier and cheaper to ban first and ignore the victim's response, satisfying the complainer and at the same time giving a time out to someone others have identified as a trouble-maker.

The chilling effect these unfair actions have on free speech and debate can be very frustrating, but it can also be devastating for people who rely on Facebook to promote their business or to interact with their customers.

Ode to Reporting Asshats:

Here I sit in front of my screen

Looking for posts that I find mean

Oh my, is that a pic of a tit?

I must hurry up and report that shit

It's not my fault that I'm a desperate loner

Or that sexy pics give me a boner

In my ass, I carry a big stick

That turns me into a whiny, little prick

I'll always be here, watching your page

Acting like 12 instead of my age

I act like a friendly, happy fan

But I'm really hoping to get you a ban

I usually go by the name of troll

But also answer to the name asshole
